Nvidia Shares Recipe to Accelerate AI Cloud Adoption

In March, Nvidia revealed blueprints for a new open source Tesla GPU-based accelerator, HGX-1, developed for clouds with Microsoft under its Project Olympus. It turns out HGX-1 was actually the first embodiment of Nvidia’s HGX reference architecture and partner program. Today Nvidia announced that it is partnering with major ODMs (Foxconn, Inventec, Quanta and Wistron) to give them early access to the HGX design and Nvidia GPU computing technologies.

The program will enable original design manufacturers (ODMs) to “more quickly design and bring to market a wide range of qualified GPU-accelerated systems for hyperscale datacenters,” Nvidia said. “Through the program, Nvidia engineers will work closely with ODMs to help minimize the amount of time from design win to production deployments.”

“It’s kind of a basic recipe,” said Rob Ober, Tesla Chief Platform Architect at Nvidia, of the HGX reference platform. “What we’ve done is given the cookbook for optimizing the placement of the GPUs, distributing the power, routing the NVLinks and getting timing closure.

“We’re making it available because frankly it’s really hard stuff to get right, and there are certain core parts of the design that there are benefits to having it the same across different vendors. By standardizing it, not only do we make it easier for the ODMs to implement, we make it more streamlined for the entire software stack to be able to rely on what’s there.”

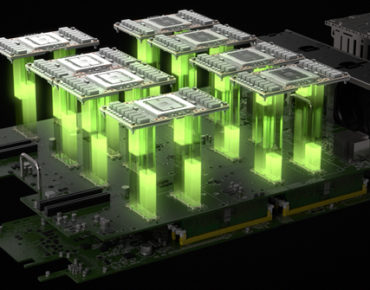

The HGX architecture (shown above) includes eight Nvidia Tesla P100 GPUs connected in a cube mesh using Nvidia NVLink high-speed interconnects and PCIe topologies. The upcoming Volta GPU will be drop-in compatible.
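To make the "cube mesh" idea concrete, here is a minimal Python sketch of one way eight GPUs can be wired. It is an illustration, not Nvidia's published netlist: it assumes the layout commonly described for the P100-based DGX-1, in which GPUs 0-3 and 4-7 each form a fully connected quad and every GPU also links to its counterpart in the other quad. The GPU numbering and the function names (`cube_mesh_links`, `neighbors`, `max_hops`) are hypothetical, and PCIe links are left out.

```python
from collections import deque
from itertools import combinations

NUM_GPUS = 8  # the article states eight Tesla P100 GPUs


def cube_mesh_links():
    """Return NVLink pairs for an ASSUMED hybrid cube-mesh layout."""
    links = set()
    # Assumption: each group of four GPUs is fully connected.
    for quad in (range(0, 4), range(4, 8)):
        links.update(combinations(quad, 2))
    # Assumption: each GPU also links to its counterpart in the other quad.
    for gpu in range(4):
        links.add((gpu, gpu + 4))
    return links


def neighbors(links):
    """Build an adjacency map {gpu: set of directly linked gpus}."""
    adj = {gpu: set() for gpu in range(NUM_GPUS)}
    for a, b in links:
        adj[a].add(b)
        adj[b].add(a)
    return adj


def max_hops(adj):
    """Largest shortest-path length (in NVLink hops) between any two GPUs."""
    worst = 0
    for start in adj:
        dist = {start: 0}
        queue = deque([start])
        while queue:  # plain breadth-first search
            node = queue.popleft()
            for nxt in adj[node]:
                if nxt not in dist:
                    dist[nxt] = dist[node] + 1
                    queue.append(nxt)
        worst = max(worst, max(dist.values()))
    return worst


if __name__ == "__main__":
    links = cube_mesh_links()
    adj = neighbors(links)
    print("NVLink connections:", len(links))
    print("links per GPU:", sorted(len(n) for n in adj.values()))
    print("worst-case hops:", max_hops(adj))
```

Under these assumptions the sketch yields 16 links, four per GPU, with any two GPUs at most two NVLink hops apart. A fixed logical layout of this kind is what lets software treat every HGX-class system the same way, as Ober describes below.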

DGX-1, HGX-1 and Big Basin from Facebook are all based on this reference architecture; they all employ exactly the same board. Ober characterized Big Basin as the “first straight use of the reference,” while Microsoft made some physical changes with HGX-1. They moved where the slots are and they slightly tweaked the PCI switch complex, said Ober, but “the important thing is logically, it’s identical,” he told us. “So to all the software and even to the host node it looks exactly the same, that’s really the critical part. So there are ways to innovate on that, especially around physical packaging but we’re trying to keep it logically the same.”

As noted above, Nvidia’s own DGX-1 “deep learning supercomputer” is also an implementation of the HGX reference platform. It “literally has the reference platform in it,” said Ober, adding: “DGX-1 is first and foremost an appliance. It’s a solution if somebody needs to get the highest performance deep learning platform in the world and they don’t have the wherewithal to assemble their own systems, which is by the way a very hard thing. The only people who would use HGX-1 are effectively creating their own systems.” Like Microsoft, for example, which can bond the HGX-1 with one of its standard servers and plug in its own software infrastructure.

Hyperscalers, cloud giants and OEMs can start with the reference design and innovate on the enclosures, power supplies, cooling, server nodes that are attached to the cabling, etc. DGX-1, Big Basin and HGX-1 are the public versions, but Nvidia expects others to follow. Ober explained the HGX partner program is a way to manage “tremendous demand” and enable the “software platform to grow out seamlessly.”

“Through this new partner program with Nvidia, we will be able to more quickly serve the growing demands of our customers, many of whom manage some of the largest data centers in the world,” echoed Taiyu Chou, general manager of Foxconn/Hon Hai Precision Ind Co., Ltd., and president of Ingrasys Technology Inc. “Early access to Nvidia GPU technologies and design guidelines will help us more rapidly introduce innovative products for our customers’ growing AI computing needs.”

The HGX-1 platform is expected to debut in the Azure cloud. Although we don’t have confirmation on when that will be, Ober said that both HGX-1 and Big Basin are “pre-production but very close.” Further, Nvidia characterized HGX as “the ideal reference architecture for cloud providers seeking to host the newly announced Nvidia GPU Cloud,” so expect more there.

During his keynote at Nvidia’s annual technology conference earlier this month, Nvidia CEO Jensen Huang said that Volta will come to DGX-1 in Q3 with OEM availability in Q4. Beyond that Nvidia would not disclose how it is strategizing ramp-up of Volta production, but Ober emphasized compatibility of the Volta across HGX platforms. Immediate upgrades will be possible on all HGX-class systems when the Volta V100 GPU becomes available later this year.

“Quite literally you can drop a Volta in there and you will get on the order of three times the deep learning training performance bump,” said Ober. “We are setting the stage to really accelerate the adoption curve on Volta. Everybody wants that, we want it, and we’re trying to make it really easy.”

With over a decade’s experience covering the HPC space, Tiffany Trader is one of the preeminent voices reporting on advanced scale computing today.